Press release

AI Inference Chip Market Accelerates Alongside the Broader AI Inference Market as Generative AI, Edge Computing, and Hyperscale Infrastructure Drive Next-Generation Compute Demand

Wilmington, DE, USA, May 2026 - According to MarketGenics Global Research, the global AI Inference Chip Market is projected to expand from USD 13.7 billion in 2025 to USD 56.9 billion by 2035, registering a CAGR of 15.3% during the forecast period as hyperscalers, enterprise AI deployments, generative AI applications, and real-time edge intelligence infrastructure rapidly reshape the broader AI inference market globally.

The AI Inference Chip Market is emerging as one of the most strategically important layers within the wider AI inference market ecosystem, where semiconductor companies, cloud providers, AI infrastructure vendors, and enterprise technology firms are racing to optimize inference efficiency for large language models (LLMs), multimodal AI systems, autonomous platforms, industrial AI applications, and edge intelligence environments.

Unlike AI training infrastructure, which is focused primarily on model development, AI inference infrastructure is centered on executing trained AI models at scale with low latency, energy efficiency, high throughput, and cost optimization. This transition is rapidly increasing demand for high-performance AI inference chips capable of supporting trillion-parameter AI workloads across cloud data centers, enterprise AI systems, industrial automation infrastructure, automotive intelligence platforms, and edge AI devices.

The rapid expansion of the broader AI inference market is fundamentally reshaping semiconductor priorities as enterprises increasingly demand scalable inference hardware optimized for generative AI deployment, retrieval-augmented generation (RAG), AI copilots, recommendation engines, AI search systems, cybersecurity analytics, robotics, autonomous systems, and real-time AI-driven decision infrastructure.

Get Sample Copy of the Report: https://marketgenics.co/download-report-sample/ai-inference-chip-market-52961

==============================

Generative AI and Trillion-Parameter Models Reshape AI Inference Infrastructure Demand

==============================

The explosive growth of generative AI ecosystems is becoming the single largest catalyst transforming both the AI inference market and the AI inference chip market globally.

Rapid deployment of:

• large language models (LLMs)

• multimodal AI systems

• enterprise AI copilots

• autonomous AI agents

• generative search infrastructure

• AI coding assistants

• industrial AI platforms

• recommendation engines

• real-time analytics systems

• AI cybersecurity architectures

is significantly increasing demand for inference-optimized semiconductor architectures capable of executing AI workloads with lower latency, reduced energy consumption, and improved throughput efficiency.

Unlike training infrastructure, inference environments require continuous execution efficiency at production scale. As enterprise AI adoption accelerates, semiconductor vendors are increasingly prioritizing:

• inference acceleration architectures

• low-power AI hardware

• transformer inference optimization

• AI accelerator chips

• edge inference silicon

• GPU inference scalability

• ASIC-based AI acceleration

• neural processing units (NPUs)

• tensor processing architectures

• memory-bandwidth optimization

This transition is driving a new infrastructure race across hyperscalers, semiconductor companies, cloud providers, and enterprise AI platform vendors seeking to optimize the economics of large-scale AI inference deployment.

According to MarketGenics Global Research, the AI inference chip market is likely to create an incremental opportunity exceeding USD 43 billion by 2035 as enterprises increasingly prioritize scalable AI inference infrastructure across cloud and edge ecosystems.
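The headline figures quoted in this release can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only and is not part of the MarketGenics methodology; it assumes ten annual compounding periods between 2025 and 2035, and the variable and function names are hypothetical.

```python
# Figures quoted in the release, in USD billions.
# Names below are illustrative, not taken from the report.
start_value = 13.7   # 2025 market size
end_value = 56.9     # 2035 projected market size
years = 2035 - 2025  # assumption: 10 annual compounding periods


def cagr(start: float, end: float, periods: int) -> float:
    """Return the compound annual growth rate as a fraction."""
    return (end / start) ** (1 / periods) - 1


# Absolute growth over the forecast period.
incremental = end_value - start_value

# Growth rate implied by the two endpoints.
rate = cagr(start_value, end_value, years)

print(f"Incremental opportunity: USD {incremental:.1f} billion")
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption, the difference between the two market sizes is about USD 43.2 billion, consistent with the "exceeding USD 43 billion" figure, and the implied growth rate rounds to the stated 15.3% CAGR.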

==============================

NVIDIA, Intel, AMD, Google, and Qualcomm Intensify Competition Across AI Inference Ecosystems

==============================

The global AI inference chip market remains highly consolidated, with dominant semiconductor and hyperscale technology companies controlling a significant portion of high-performance inference infrastructure deployment globally.

Competitive differentiation is increasingly being defined by:

• GPU inference scalability

• AI accelerator efficiency

• memory bandwidth optimization

• low-latency inference execution

• open software ecosystem integration

• edge AI processing capability

• cloud AI infrastructure compatibility

• energy-efficient AI compute

• multimodal AI acceleration

• hyperscale deployment readiness

• AI software interoperability

• advanced semiconductor packaging

NVIDIA Corporation continues to hold a dominant position within the AI inference chip market through its CUDA software ecosystem, TensorRT-LLM infrastructure, advanced GPU architectures, and hyperscale AI deployment leadership.

The company reported approximately USD 60.9 billion in revenue for fiscal 2024 while continuing to expand Blackwell AI infrastructure platforms optimized for large-scale AI inference and trillion-parameter generative AI deployment.

In March 2024, NVIDIA introduced the Blackwell AI Platform integrating GB200 Grace Blackwell Superchips engineered for hyperscale inference environments. The platform reportedly delivers up to 25× lower inference cost and energy consumption compared with Hopper-based infrastructure while supporting trillion-parameter AI models across enterprise and cloud AI deployments.

NVIDIA additionally unveiled the DGX SuperPOD powered by up to 576 Blackwell GPUs delivering up to 15× faster real-time inference for large-scale generative AI infrastructure environments supporting enterprise and hyperscale AI ecosystems globally.

Intel Corporation remains one of the most influential challengers within the broader AI inference market through Habana Gaudi accelerators, Xeon AI infrastructure, oneAPI software ecosystems, and edge-AI processing architectures optimized for enterprise inference deployment.

The company reported approximately USD 53.1 billion in revenue for full-year 2024 while aggressively expanding AI inference infrastructure across enterprise, cloud, and industrial AI environments.

In April 2024, Intel introduced the Gaudi 3 AI Accelerator delivering 4× higher BF16 compute performance, 1.5× greater memory bandwidth, and 2× networking bandwidth improvements compared with Gaudi 2, substantially improving inference efficiency for large language models and multimodal AI systems.

Advanced Micro Devices (AMD) continues strengthening competitive positioning through Instinct MI300X accelerators, ROCm open software ecosystems, FPGA integration capabilities acquired through Xilinx, and high-performance AI accelerator architectures supporting enterprise AI workloads.

In May 2024, AMD accelerated deployment of Microsoft Azure OpenAI Service workloads using Instinct MI300X accelerators supporting GPT-3.5 and GPT-4 inference while improving price-performance economics for open AI infrastructure ecosystems.

Google LLC continues expanding TPU-based AI inference infrastructure across cloud AI environments.

In April 2025, Google introduced Ironwood, its seventh-generation TPU architecture purpose-built exclusively for AI inference workloads. Ironwood reportedly scales to 9,216 chips while delivering 42.5 exaflops of compute for large-scale AI inference environments.
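For a rough sense of scale, the pod-level Ironwood figures above imply a per-chip number. The sketch below simply divides the quoted aggregate compute by the quoted chip count. It assumes the 42.5 exaflops figure is a peak aggregate for a full 9,216-chip configuration, and it says nothing about numeric precision or sustained throughput, which this release does not specify. The variable names are hypothetical.

```python
# Figures quoted in the release; names are illustrative.
pod_exaflops = 42.5   # aggregate compute at full scale
chips = 9216          # chips in the full-scale configuration

# 1 exaflop = 1,000 petaflops.
# Assumption: compute divides evenly across all chips.
per_chip_petaflops = pod_exaflops * 1000 / chips

print(f"Implied per-chip compute: {per_chip_petaflops:.2f} petaflops")
```

On those assumptions, each chip contributes roughly 4.6 petaflops to the quoted total.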

Qualcomm Technologies is increasingly differentiating through Snapdragon AI platforms, Hexagon NPUs, and edge AI inference architectures optimized for smartphones, automotive intelligence systems, IoT infrastructure, and on-device generative AI processing.

In January 2025, Qualcomm announced the Qualcomm AI On-Prem Appliance Solution enabling enterprises to deploy generative AI inference infrastructure locally instead of relying exclusively on cloud-based AI environments.

==============================

GPU Architectures Continue Dominating the AI Inference Chip Market

==============================

Graphics Processing Units (GPUs) currently account for approximately 54% of the global AI inference chip market due to their massive parallel processing capability and mature AI software ecosystem supporting deep learning and generative AI workloads.

GPU dominance remains strongly supported by:

• parallel compute scalability

• mature AI development ecosystems

• CUDA acceleration capability

• transformer model optimization

• hyperscale cloud compatibility

• high-throughput inference processing

• generative AI workload acceleration

• multimodal AI execution efficiency

Microsoft Azure, AWS, Google Cloud, and other hyperscale providers increasingly rely on GPU-based inference infrastructure integrating NVIDIA A100, H100, and Blackwell architectures for enterprise-scale AI deployment environments.

Meanwhile, FPGA-based AI inference architectures are gaining traction across latency-sensitive industrial and edge environments requiring customizable low-power inference processing capability.

Application-Specific Integrated Circuits (ASICs), Tensor Processing Units (TPUs), Neural Processing Units (NPUs), and neuromorphic architectures are also witnessing increasing enterprise deployment as organizations seek optimized workload-specific inference acceleration for generative AI and edge intelligence systems.

==============================

Edge AI and On-Device Inference Create New Long-Term Growth Corridors

==============================

The rapid emergence of edge AI infrastructure is substantially expanding the commercial scope of the AI inference chip market beyond centralized cloud data centers.

Growing deployment across:

• autonomous vehicles

• robotics systems

• industrial automation

• smart surveillance

• healthcare diagnostics

• AI smartphones

• smart manufacturing

• retail analytics

• IoT infrastructure

• defense intelligence systems

is significantly increasing demand for low-power AI inference chips capable of supporting localized AI execution with minimal latency and enhanced data privacy.

Modern edge AI ecosystems increasingly require:

• low-power inference acceleration

• on-device AI processing

• real-time analytics capability

• localized inference execution

• efficient thermal performance

• bandwidth optimization

• secure AI inference architectures

This shift toward decentralized AI processing is rapidly expanding addressable demand across automotive intelligence systems, industrial AI infrastructure, healthcare AI devices, and consumer AI ecosystems globally.

Qualcomm's Snapdragon AI architectures and Apple's Neural Engine platforms continue accelerating on-device generative AI capability supporting real-time smartphone inference, intelligent assistants, and contextual AI interaction environments.

==============================

Open AI Software Ecosystems Reshape Competitive Dynamics

==============================

One of the most important structural transitions within the broader AI inference market is the movement toward open AI software ecosystems reducing dependency on proprietary infrastructure environments.

Enterprises increasingly prefer interoperable AI ecosystems capable of supporting:

• multi-vendor AI deployment

• open inference frameworks

• scalable AI orchestration

• lower infrastructure lock-in risk

This trend is accelerating adoption of:

• ROCm

• oneAPI

• TensorFlow

• PyTorch

• OpenXLA

• ONNX Runtime

• TensorRT-LLM

• OpenAI-compatible inference frameworks

The transition toward open inference ecosystems is intensifying competition across the AI inference chip market while lowering deployment friction for enterprise AI infrastructure expansion.

AMD's ROCm ecosystem, Intel's oneAPI environment, and Google's TPU software stack increasingly compete against NVIDIA's CUDA dominance as enterprises seek more flexible and cost-efficient AI deployment architectures.

==============================

North America Leads Global AI Inference Chip and AI Inference Market Expansion

==============================

North America currently accounts for approximately 40-45% of the global AI inference chip market and remains the most strategically important region within the broader AI inference market ecosystem globally.

Regional dominance is supported by:

• hyperscale cloud infrastructure

• advanced semiconductor ecosystems

• enterprise AI adoption

• generative AI commercialization

• venture capital concentration

• AI startup ecosystems

• cloud AI platform leadership

• advanced data-center infrastructure

• early enterprise AI integration

The United States continues leading AI inference deployment due to the strong presence of NVIDIA, Intel, AMD, Google, Microsoft, Qualcomm, AWS, and other hyperscale AI infrastructure providers driving rapid commercialization of AI compute ecosystems globally.

Meanwhile, Asia Pacific is rapidly emerging as a major semiconductor manufacturing and edge AI deployment hub due to expanding AI hardware production, smartphone AI integration, automotive intelligence investment, and industrial automation adoption.

==============================

COMPLETE COMPETITIVE LANDSCAPE & KEY PLAYERS

==============================

Major companies operating in the global AI inference chip market include:

• NVIDIA Corporation

• Intel Corporation

• Advanced Micro Devices (AMD)

• Google LLC

• Qualcomm Technologies

• Amazon Web Services

• Apple Inc.

• Microsoft Corporation

• Arm Holdings

• Broadcom Inc.

• Cerebras Systems

• d-Matrix Corporation

• Esperanto Technologies

• Graphcore Limited

• Hailo Technologies Ltd.

• Huawei Technologies

• Marvell Technology

• MediaTek Inc.

• Meta Platforms

• Mythic AI

• SambaNova Systems

• Samsung Electronics

• Taiwan Semiconductor Manufacturing Company (TSMC)

• Tenstorrent Inc.

• Untether AI

• Vastai Technologies

• Other Key Players

==============================

FULL MARKET SEGMENTATION STRUCTURE

==============================

By Compute Type

• Graphics Processing Unit (GPU)

• Central Processing Unit (CPU)

• Field-Programmable Gate Array (FPGA)

• Application-Specific Integrated Circuit (ASIC)

• Neural Processing Unit (NPU)

• Tensor Processing Unit (TPU)

• Vision Processing Unit (VPU)

• Neuromorphic Chips

• Others

By Hardware Form Factor

• Discrete Chip / PCIe Cards

• System-on-Chip (SoC)

• Multi-Chip Module (MCM)

• Chip-on-Wafer-on-Substrate (CoWoS)

• Accelerator Cards / Modules

By Processing Architecture

• Von Neumann Architecture

• Non-Von Neumann Architecture

• Neuromorphic Architecture

• Dataflow Architecture

• In-Memory Computing Architecture

• Hybrid Architecture

• Others

By Memory Type

• HBM

• LPDDR

• GDDR6/GDDR6X

• SRAM-Based

• eDRAM

By Deployment Mode

• Cloud-Based Inference

• On-Premise / Data Center Inference

• Edge Inference

    • Edge Server

    • Edge Gateway

    • Edge Device / End Node

• Hybrid (Cloud + Edge)

By Application

• NLP & LLMs

• Computer Vision & Image Recognition

• Speech Recognition & Synthesis

• Recommendation Systems

• Autonomous Driving & ADAS

• Robotics & Automation

• Generative AI

• Predictive Analytics & Forecasting

• Anomaly Detection & Cybersecurity

• Drug Discovery & Genomics

• Other Applications

By Industry Verticals

• Healthcare & Life Sciences

• Automotive & Transportation

• Consumer Electronics

• IT & Telecommunications

• Retail & E-Commerce

• Banking, Financial Services & Insurance (BFSI)

• Manufacturing & Industrial

• Defense, Aerospace & Government

• Media, Entertainment & Education

• Energy & Utilities

• Agriculture & Precision Farming

• Smart Cities & Infrastructure

• Other Verticals

Access the full report and strategic insights: https://marketgenics.co/reports/ai-inference-chip-market-52961

==============================

RECOMMENDED REPORTS:

==============================

Chiplet Market: https://marketgenics.co/reports/chiplet-market-20933

Power Management IC Market: https://marketgenics.co/reports/power-management-ic-market-15175

Contact:

Mr. Debashish Roy

MarketGenics Global Research

800 N King Street, Suite 304 #4208, Wilmington, DE 19801, United States

USA: +1 (302) 303-2617

Email: sales@marketgenics.co

Website: https://marketgenics.co

About MarketGenics

MarketGenics is a global market research and business advisory firm empowering decision-makers across startups, Fortune 500 companies, non-profit organizations, universities, and government institutions. The company delivers comprehensive market intelligence, industry analysis, and strategic insights across diverse sectors.

MarketGenics publishes detailed industry research reports combining granular quantitative analysis with expert insights on market trends, competitive landscapes, and emerging opportunities. These reports help organizations make informed strategic decisions, identify growth opportunities, and support sustainable business development.

In addition to research publications, MarketGenics supports organizations with strategic insights on product development, application modeling, market expansion strategies, and identifying niche growth opportunities.

This release was published on openPR.

Permanent link to this press release:

Copy

Please set a link in the press area of your homepage to this press release on openPR. openPR disclaims liability for any content contained in this release.

You can edit or delete your press release AI Inference Chip Market Accelerates Alongside the Broader AI Inference Market as Generative AI, Edge Computing, and Hyperscale Infrastructure Drive Next-Generation Compute Demand here

News-ID: 4504542 • Views: …

More Releases from MarketGenics Global Research

Ultra-Wideband Market Accelerating with a CAGR of 19.6% by 2035 Driven by Spatia …

Wilmington, DE, USA, May 2026 - According to MarketGenics Global Research, the global Ultra-wideband Market is projected to grow from USD 2.1 billion in 2025 to USD 12.6 billion by 2035, expanding at a CAGR of 19.6% during the forecast period as ultra-precise positioning, secure spatial awareness, automotive digital key ecosystems, industrial RTLS deployment, and connected device interoperability reshape next-generation wireless infrastructure globally.

The market is entering a transformational phase where…

Global Proximity Sensors Market Advancing Towards USD 11.4 Billion by 2035 Prope …

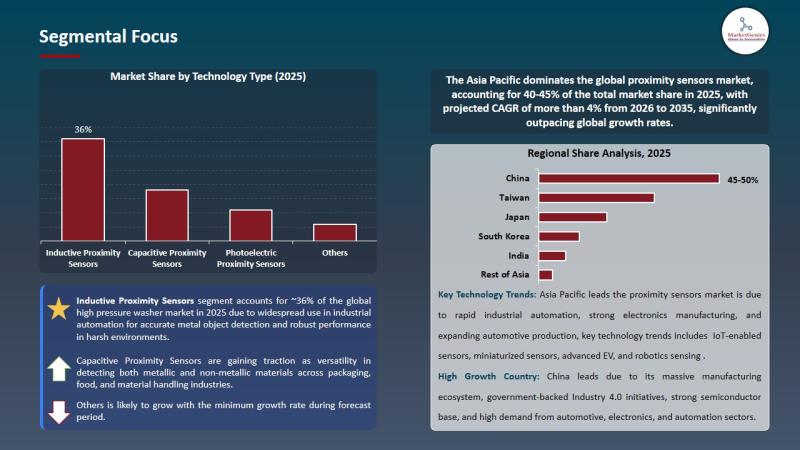

Wilmington, DE, USA, May 2026 - According to MarketGenics Global Research, the global Proximity Sensors Market is projected to expand from USD 6.4 billion in 2025 to USD 11.4 billion by 2035, advancing at a CAGR of 5.9% during the forecast period as manufacturers accelerate investment in intelligent factory automation, industrial robotics, EV production infrastructure, machine vision ecosystems, and IoT-enabled predictive maintenance architectures.

Unlike traditional industrial sensing cycles driven primarily by…

Solid State Relay Market Size to Reach USD 2.5 Billion by 2035 as Industrial Aut …

Wilmington, DE, USA, May 2026 - According to MarketGenics Global Research, the global Solid State Relay Market is valued at USD 1.4 billion in 2025 and is projected to reach USD 2.5 billion by 2035, expanding at a CAGR of 6.1% during the forecast period.

The industry is entering a new phase of intelligent industrial electrification as solid state relays (SSRs) evolve from conventional semiconductor switching components into high-reliability, IoT-enabled, thermally…

Programmable Logic Controller (PLC) Market Size to Reach USD 14.3 Billion by 203 …

Wilmington, DE, USA, May 07, 2026 - According to MarketGenics Global Research, the global Programmable Logic Controller (PLC) Market, also referred to as the PLC Automation Market, is valued at USD 6.2 billion in 2025 and is projected to reach USD 14.3 billion by 2035, expanding at a CAGR of 8.7% during the forecast period.

The global PLC automation industry is entering a new phase of industrial transformation as programmable logic…

More Releases for Inference

General Compute Launches ASIC-First Inference Cloud for Autonomous AI Agents

General Compute today announced its inference cloud platform built for AI agents, working with early partners now ahead of general availability on May 15, 2026. The platform runs on purpose-built AI accelerators rather than general-purpose GPUs. More information is available at generalcompute.com.

SAN FRANCISCO -- April 18, 2026 -- General Compute Inc. today announced its inference cloud platform, which is designed for AI agent workloads. The company is working with early…

NexaStack AI - Unified Inference Platform for any Model, on any Cloud

XenonStack Launches NexaStack AI - Unified Inference Platform for any Model, on any Cloud

XenonStack annoucing launch of Unified Inference Platform, NexaStack AI, that enables organizations to deploy any model on any cloud while maintaining complete data sovereignty and security. The platform is specifically designed for enterprises requiring both the flexibility of Agentic AI and the strict privacy controls demanded by regulated industries.

Your Data. Your Agent. Your…

AI Inference Market Is Booming So Rapidly | Nvidia, Microsoft, IBM

The Global AI Inference Market Size is estimated at $133.8 Billion in 2025 and is forecast to register an annual growth rate (CAGR) of 18.8% to reach $630.7 Billion by 2034.

The latest study released on the Global AI Inference Market by USD Analytics Market evaluates market size, trend, and forecast to 2034. The AI Inference market study covers significant research data and proofs to be a handy resource document for…

AI Inference Server PCB Market Key Innovations 2025-2032

The AI Inference Server PCB market is a rapidly evolving sector that has garnered significant attention due to its integral role in powering artificial intelligence applications across various industries. As the demand for AI-driven solutions continues to surge, the relevance of AI Inference Server PCBs has become increasingly pronounced. These printed circuit boards serve as the backbone of AI inference servers, facilitating the processing of vast amounts of data with…

Youdao (NYSE:DAO) Launches Lightweight Inference Model "Confucius-o1," Achieving …

In 2025, the AI industry has witnessed a surge in the development of large-scale inference models, following OpenAI's release of o1. Various inference models have been emerging, with their high-level reasoning capabilities significantly enhanced and their application value increasingly recognized by the industry.

On January 22, NetEase Youdao officially launched China's first step-by-step exposition inference model, "Confucius-o1." As a 14B lightweight single model, Confucius-o1 supports deployment on consumer-grade GPUs and utilizes…

Best Conceptual Inference of Strategic Brand Management Assignment

The design and implementation of marketing initiatives and programmers to increase, gauge, and communicate brand equity are part of the strategic brand management process. Strategic Brand management involves creating a plan that successfully maintains or increases brand recognition, strengthens brand associations, and emphasizes brand quality and usage. Sign in today with us and get all updates, knowledge and information about strategic brand management along with Strategic Brand Management Assignment Help!

…